Many failed projects are lost well before launch. They go off track when the team moves from assumptions to scope without first proving the problem is worth solving. That is why digital product development should be managed as a staged risk-control system. Analyses of startup failures regularly flag lack of market need as a common cause, while PMI found that nearly half of unsuccessful projects miss goals because of poor requirements management.

Internal teams and external providers of digital product development services should be held to the same operating standard at every stage: define the product clearly, identify likely failure points, set a validation method, and agree on the metrics that will show drift early. Google’s HEART framework fits this approach because it ties product goals to measurable user-centered signals before assumptions turn into roadmap commitments.

1. Problem Definition and Validation

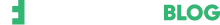

This stage of the product development process is about proving that the pain point is real, recurring, and expensive enough to justify a product. Teams fail here when they collect feature requests instead of evidence, or when they confuse internal enthusiasm with external demand. If the problem is weak, every later stage becomes an expensive exercise in polishing the wrong thing.

Validation is your decision gate. Before approving design or development, ask for a one-page brief that names the target user, the job to be done, the current workaround, and the commercial value of solving it. Then, require a small proof set:

- 10–15 interviews with the target role, not with friendly internal stakeholders;

- A ranked list of repeated pain points, with frequency and severity;

- One clear activation event that proves a new user reached the first value;

- One critical user task that must succeed in early testing.
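
The ranked pain-point list can be produced with very little tooling. The sketch below is one possible approach, not a prescribed method: it assumes each interview note is recorded as a hypothetical `(interviewee, pain_point, severity)` tuple with severity on a 1 to 5 scale, counts how many distinct interviewees raised each pain point, and sorts by that frequency and then by average severity. The sample data is invented for illustration.

```python
from collections import defaultdict

# Hypothetical interview notes: (interviewee_id, pain_point, severity 1-5).
notes = [
    ("u1", "manual export", 4),
    ("u2", "manual export", 5),
    ("u3", "slow approvals", 3),
    ("u3", "manual export", 3),
    ("u4", "slow approvals", 2),
]


def rank_pain_points(notes):
    """Rank pain points by distinct interviewees, then by mean severity."""
    mentioned_by = defaultdict(set)
    severities = defaultdict(list)
    for interviewee, pain, severity in notes:
        mentioned_by[pain].add(interviewee)
        severities[pain].append(severity)

    rows = []
    for pain, people in mentioned_by.items():
        frequency = len(people)
        mean_severity = sum(severities[pain]) / len(severities[pain])
        rows.append((pain, frequency, round(mean_severity, 2)))

    # Highest frequency first; mean severity breaks ties.
    return sorted(rows, key=lambda row: (row[1], row[2]), reverse=True)


ranked = rank_pain_points(notes)
for pain, frequency, mean_severity in ranked:
    print(f"{pain}: raised by {frequency} interviewees, mean severity {mean_severity}")
```

Counting distinct interviewees rather than raw mentions keeps one vocal participant from inflating a pain point's rank.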

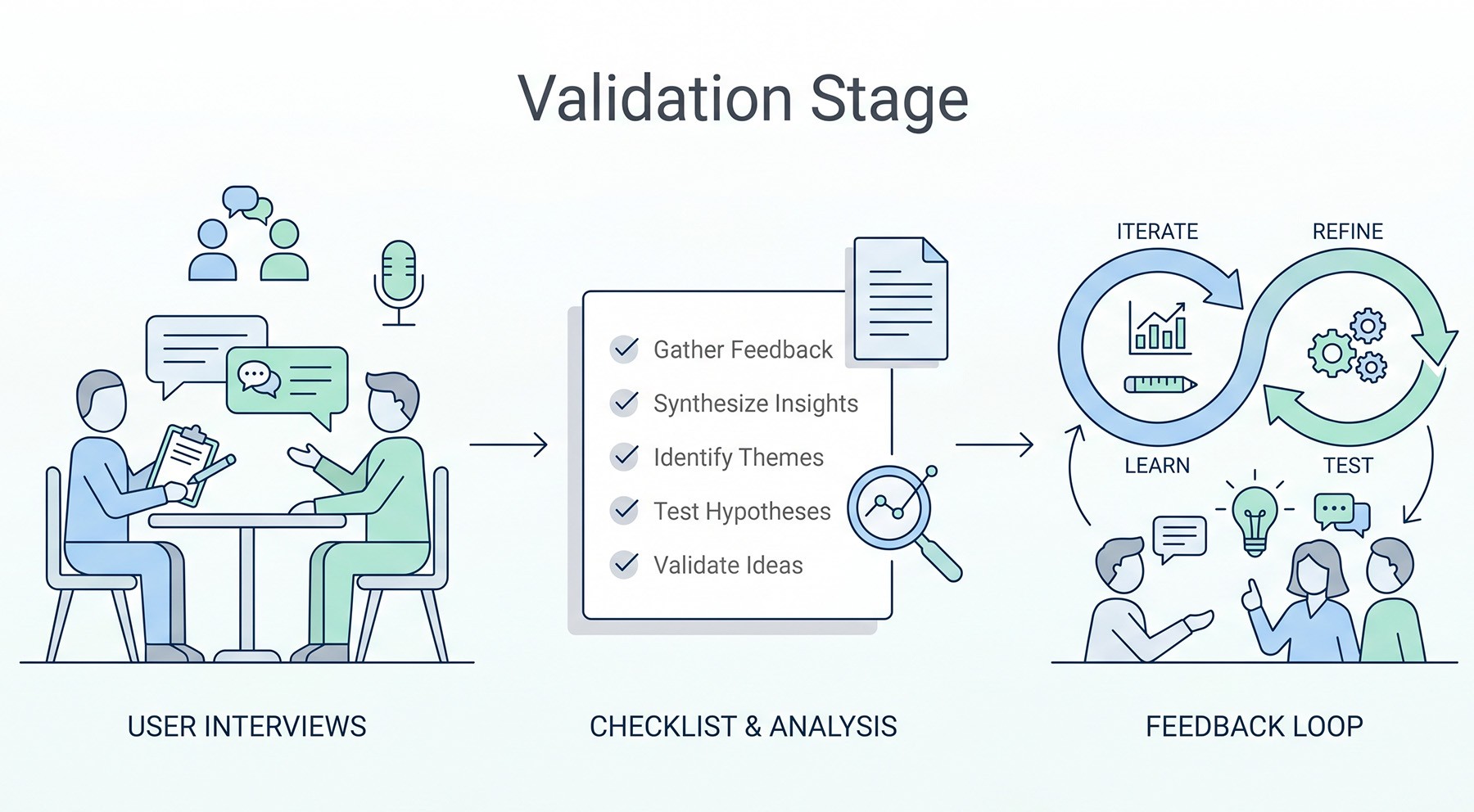

2. Requirements and Scope Control

This is the stage where a good idea usually gets damaged by vague language. Teams fail when they write requirements as ambitions, mix phase-one essentials with “nice to have” items, or allow unresolved decisions to stay hidden inside tickets. PMI’s research puts numbers on the cost: nearly half of unsuccessful projects miss goals because of poor requirements management, and 5.1% of project spending is wasted for the same reason. That makes scope discipline one of the highest-return controls in the entire product development process.

The practical fix is simple: convert every requirement into a testable statement with an owner, dependency, and acceptance rule. If a requirement cannot be verified, it is not ready for build. Review the scope weekly using three indicators:

- scope-change rate after kickoff;

- number of unresolved requirements older than seven days;

- percentage of sprint work tied to approved requirements.
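
The three indicators above are simple ratios and counts, so they can be computed from a requirements export. The following is a minimal sketch under stated assumptions: the `Requirement` fields are hypothetical, a requirement counts as unresolved until it is approved, scope-change rate is taken as the share of requirements added or changed after kickoff, and each sprint item carries the ID of the requirement it implements (or `None`).

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Requirement:
    req_id: str
    opened: date
    approved: bool               # assumption: unresolved until approved
    changed_after_kickoff: bool  # added or materially changed after kickoff


def scope_indicators(reqs, sprint_links, today):
    """Compute the three weekly scope indicators from plain records."""
    changed = sum(1 for r in reqs if r.changed_after_kickoff)
    stale = sum(
        1 for r in reqs
        if not r.approved and (today - r.opened).days > 7
    )
    approved_ids = {r.req_id for r in reqs if r.approved}
    tied = sum(1 for link in sprint_links if link in approved_ids)
    return {
        "scope_change_rate": changed / len(reqs),
        "stale_unresolved": stale,
        "sprint_work_tied_pct": 100 * tied / len(sprint_links),
    }


reqs = [
    Requirement("R1", date(2024, 3, 1), approved=True, changed_after_kickoff=False),
    Requirement("R2", date(2024, 3, 3), approved=True, changed_after_kickoff=True),
    Requirement("R3", date(2024, 3, 4), approved=False, changed_after_kickoff=False),
    Requirement("R4", date(2024, 3, 12), approved=False, changed_after_kickoff=True),
]
# One entry per sprint item: the requirement it implements, or None.
sprint_links = ["R1", "R2", "R2", None, "R3"]

indicators = scope_indicators(reqs, sprint_links, today=date(2024, 3, 15))
print(indicators)
```

In this invented sample, half of the requirements changed after kickoff, one unresolved requirement is older than seven days, and 60% of sprint items trace back to an approved requirement.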

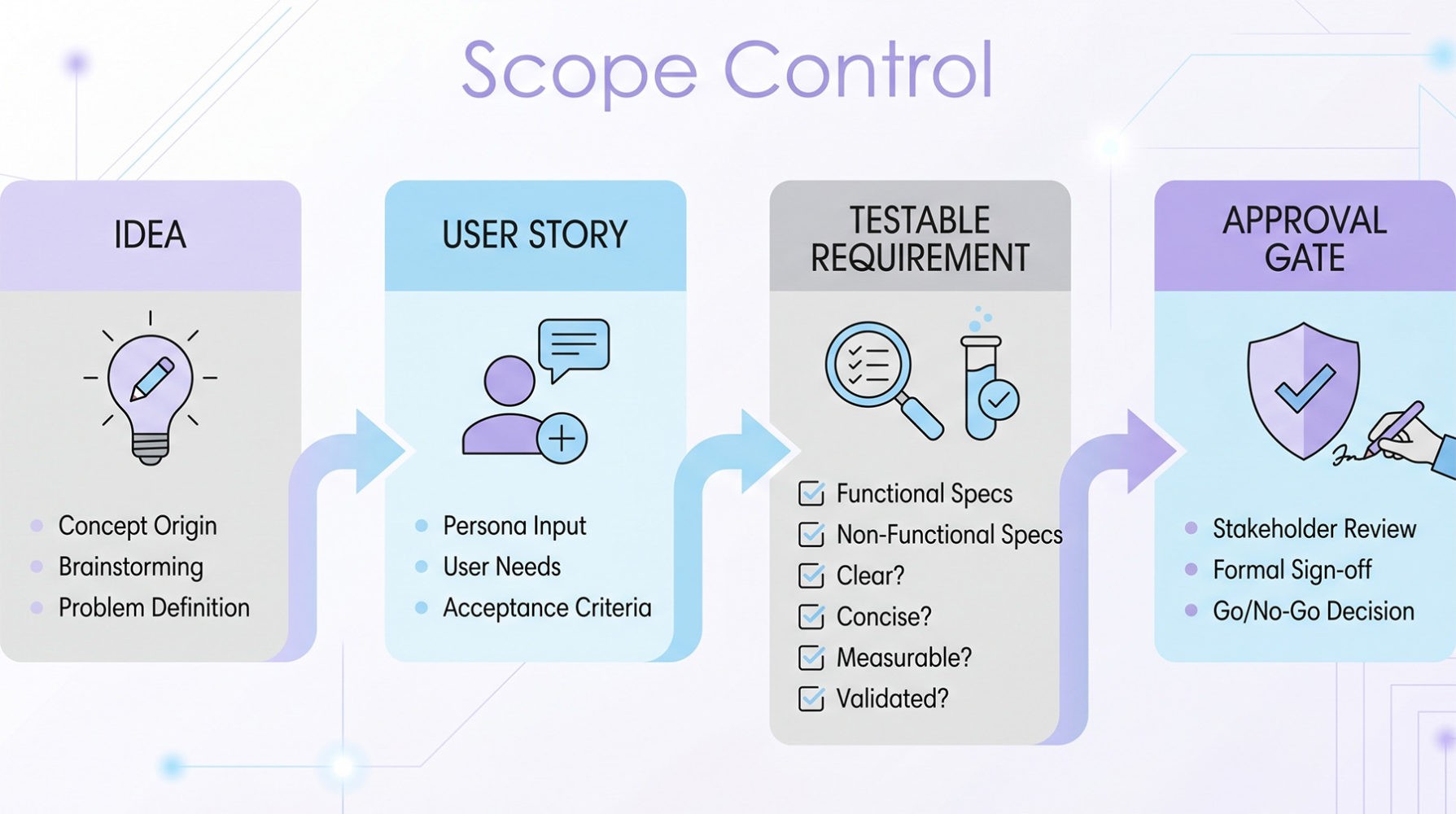

3. UX and Solution Design

This stage decides whether the product will be easy to understand under real conditions. Projects fail here when flows are designed around internal logic, edge cases are ignored, or teams approve wireframes without testing the main journey. A strong digital product development company should treat design as a measurable part of delivery, with test evidence behind every critical flow.

The safest way to work is to test early and keep the sample small enough to move fast. Nielsen Norman Group recommends frequent small usability tests with 4–5 users because that format produces actionable insight quickly. For each critical flow, track:

- task success rate;

- time on task;

- error rate;

- point of abandonment by step;

- post-task ease score or short qualitative feedback.

If task success is weak, do not move the problem into engineering and hope that implementation will hide it. Redesign the flow first. Google’s HEART model is useful here because it forces the team to link UX decisions to real user outcomes across its five categories: happiness, engagement, adoption, retention, and task success.
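
The flow metrics listed above can be derived from a handful of test-session records. The sketch below assumes a hypothetical record shape (`completed`, `seconds`, `errors`, `last_step`) and makes two choices that a team may reasonably change: time on task is the median over successful sessions only, and error rate is expressed as errors per session. The data is invented.

```python
from collections import Counter
from statistics import median

# Hypothetical usability-test sessions for one critical flow.
sessions = [
    {"completed": True, "seconds": 42, "errors": 0, "last_step": "confirm"},
    {"completed": True, "seconds": 65, "errors": 1, "last_step": "confirm"},
    {"completed": False, "seconds": 90, "errors": 3, "last_step": "payment"},
    {"completed": True, "seconds": 50, "errors": 0, "last_step": "confirm"},
    {"completed": False, "seconds": 30, "errors": 1, "last_step": "payment"},
]


def flow_metrics(sessions):
    """Summarize one flow: success, time on task, errors, abandonment."""
    done = [s for s in sessions if s["completed"]]
    abandoned = [s for s in sessions if not s["completed"]]
    return {
        "task_success_rate": len(done) / len(sessions),
        # Median over successful sessions only; None if nobody finished.
        "median_time_on_task_s": median(s["seconds"] for s in done) if done else None,
        "errors_per_session": sum(s["errors"] for s in sessions) / len(sessions),
        # Where abandoned sessions stopped, counted per step.
        "abandonment_by_step": Counter(s["last_step"] for s in abandoned),
    }


metrics = flow_metrics(sessions)
print(metrics)
```

With only four or five participants these numbers are directional rather than statistically robust, which is why the abandonment step and the qualitative feedback usually matter more than the exact percentages.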

4. Development and Delivery

This is where many teams mistake motion for progress. Features may be shipping, but the product is still failing if releases are slow, unstable, or impossible to predict. The most reliable way to manage this stage in digital product development is to track delivery speed and delivery stability together.

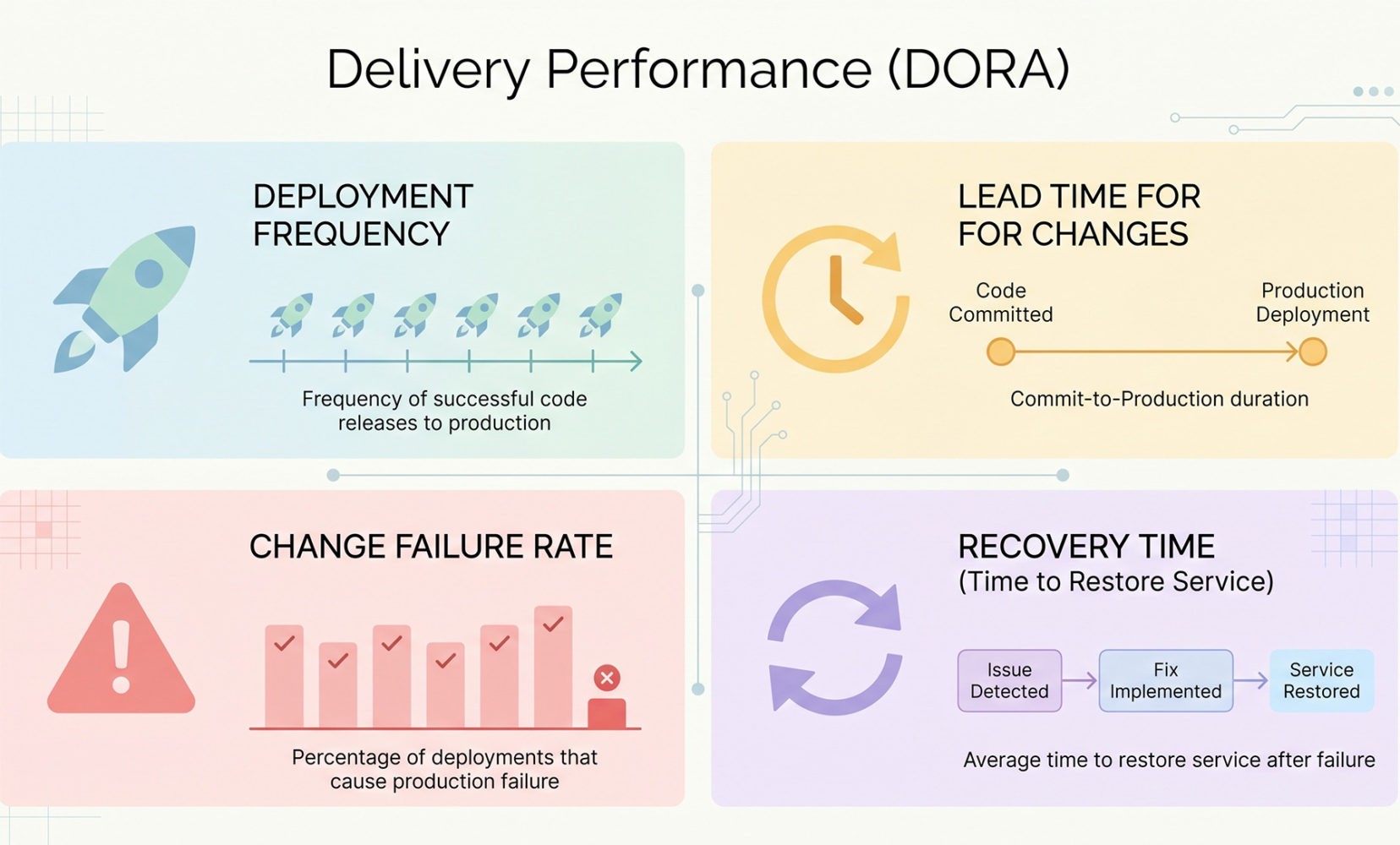

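Google Cloud’s DORA research identifies four delivery metrics that matter across software teams: deployment frequency, lead time for changes, change failure rate, and time to restore service. The first two measure speed and the last two measure stability, so reviewing all four together shows whether the team is trading one for the other.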
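
As an illustration, the four metrics can be approximated from a deployment log. This is a rough sketch, not DORA's official calculation: the record fields (`committed`, `deployed`, `failed`, `restored`) are hypothetical, lead time is measured from commit to deployment, and medians are used to limit the effect of outliers.

```python
from datetime import datetime, timedelta
from statistics import median

start = datetime(2024, 3, 4, 9, 0)

# Hypothetical deployment log covering a 14-day period.
deploys = [
    {"committed": start, "deployed": start + timedelta(hours=2), "failed": False},
    {"committed": start + timedelta(days=1),
     "deployed": start + timedelta(days=1, hours=4), "failed": False},
    {"committed": start + timedelta(days=3),
     "deployed": start + timedelta(days=3, hours=6), "failed": True,
     "restored": start + timedelta(days=3, hours=6, minutes=45)},
    {"committed": start + timedelta(days=8),
     "deployed": start + timedelta(days=8, hours=8), "failed": False},
]


def delivery_metrics(deploys, period_days):
    """Approximate the four delivery metrics from deployment records."""
    lead_times = [d["deployed"] - d["committed"] for d in deploys]
    failures = [d for d in deploys if d["failed"]]
    restore_times = [d["restored"] - d["deployed"] for d in failures]
    return {
        # Speed
        "deploys_per_week": len(deploys) * 7 / period_days,
        "median_lead_time": median(lead_times),
        # Stability
        "change_failure_rate": len(failures) / len(deploys),
        "median_time_to_restore": median(restore_times) if restore_times else None,
    }


report = delivery_metrics(deploys, period_days=14)
print(report)
```

Reading the speed pair and the stability pair side by side is the point: a rising deployment frequency with a rising change failure rate is motion, not progress.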

5. QA and Release Readiness

Testing should answer one question: what can still hurt users or revenue if we ship this version now? Teams fail here when QA starts too late, test coverage follows tickets instead of business risk, or launch approval is based on “no obvious issues” rather than explicit release criteria.

A useful release gate should be short and binary. Before launch, require the digital product development company to show:

- Zero open critical defects;

- A tested rollback path;

- Monitoring in place for the main user journey;

- Confirmed analytics events for activation, conversion, and failure states;

- Named owners for post-launch incident response.
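
Because the gate is binary, it can be written down as a checklist that returns either "go" or a list of blockers. The sketch below is one way to encode the five criteria above; the `status` dictionary and its keys are hypothetical and would be filled in from whatever defect tracker, monitoring, and analytics tools the team actually uses.

```python
def release_blockers(status):
    """Return the names of failed gate checks; an empty list means go."""
    checks = {
        "zero open critical defects": status["open_critical_defects"] == 0,
        "tested rollback path": status["rollback_tested"],
        "monitoring on main user journey": status["journey_monitoring_live"],
        "analytics events confirmed": all(status["analytics_events"].values()),
        "incident owners named": len(status["incident_owners"]) > 0,
    }
    return [name for name, passed in checks.items() if not passed]


# Hypothetical release candidate: everything passes except one analytics event.
candidate = {
    "open_critical_defects": 0,
    "rollback_tested": True,
    "journey_monitoring_live": True,
    "analytics_events": {"activation": True, "conversion": True, "failure": False},
    "incident_owners": ["on-call engineer", "product owner"],
}

blockers = release_blockers(candidate)
decision = "go" if not blockers else "no-go"
print(decision, blockers)
```

A single failed check is enough for a no-go, which keeps launch approval from drifting back to “no obvious issues.”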

6. Launch, Learning, and Post-Launch Correction

Launch is where the product starts generating truth. Projects fail at this stage when teams treat release as the finish line, ignore behavioral data, or wait too long to decide whether onboarding, pricing, or feature adoption is weak. The best digital product development programs assume that the first release is a learning instrument, not a final answer.

In the first 30 days, focus on leading indicators. Activation rate matters because it measures the share of new users who reach the first meaningful value milestone within a defined timeframe.

Ask for a weekly launch review built around these numbers:

- Activation rate by cohort;

- Task success rate for the main workflow;

- Retention at the first meaningful interval for your product;

- Support-ticket volume by issue type;

- Time to detect and time to resolve production incidents.
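
Activation rate by cohort, the first item on that list, is straightforward to compute once the activation event is defined. The sketch below assumes weekly signup cohorts (ISO weeks) and a seven-day activation window; both are illustrative choices, and the right window depends on the product. Each user is a hypothetical `(signup_date, first_value_date)` pair, with `None` for users who never reached first value.

```python
from collections import defaultdict
from datetime import date, timedelta

ACTIVATION_WINDOW = timedelta(days=7)  # assumption: adjust per product

# Hypothetical users: (signup date, date of first value or None).
users = [
    (date(2024, 3, 4), date(2024, 3, 5)),
    (date(2024, 3, 5), date(2024, 3, 20)),  # reached value too late
    (date(2024, 3, 6), date(2024, 3, 8)),
    (date(2024, 3, 7), date(2024, 3, 14)),  # exactly on the window edge
    (date(2024, 3, 11), date(2024, 3, 12)),
    (date(2024, 3, 12), None),              # never reached first value
]


def activation_by_cohort(users, window=ACTIVATION_WINDOW):
    """Share of each weekly signup cohort that activated within the window."""
    totals = defaultdict(int)
    activated = defaultdict(int)
    for signup, first_value in users:
        iso = signup.isocalendar()
        cohort = f"{iso[0]}-W{iso[1]:02d}"
        totals[cohort] += 1
        if first_value is not None and first_value - signup <= window:
            activated[cohort] += 1
    return {cohort: activated[cohort] / totals[cohort] for cohort in sorted(totals)}


rates = activation_by_cohort(users)
print(rates)
```

Comparing cohorts week over week shows whether onboarding changes are moving activation, rather than letting one blended number hide the trend.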

If activation is weak, fix onboarding before adding features. If support tickets cluster around one flow, simplify that flow before expanding the scope. If retention stalls after initial use, the team has a value-delivery problem. That is how leaders keep digital product development practical: stage by stage, metric by metric, with each decision tied to a visible risk and a visible correction path.